What Is the CLIP Interrogator?

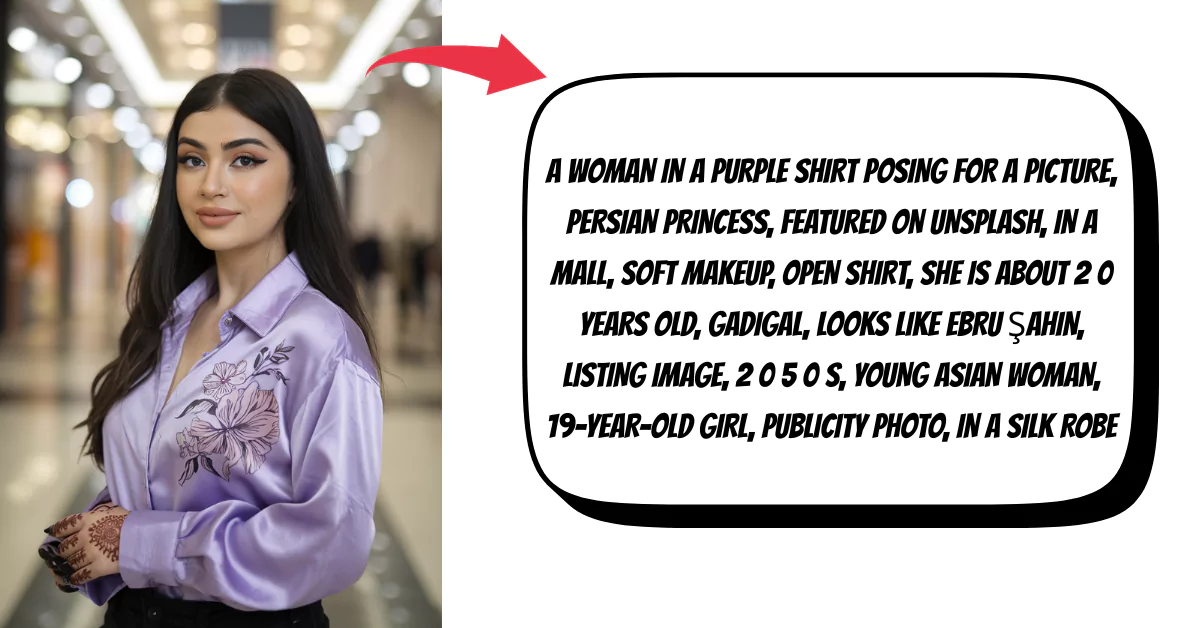

The CLIP Interrogator is a tool that uses the CLIP (Contrastive Language–Image Pre-training) model to analyze an image and generate descriptive text or tags for it. In effect, it bridges the gap between visual content and language by describing what an image contains in natural language.

The CLIP Interrogator is available on Hugging Face as a user-friendly application developed by pharmapsychotic. It uses the CLIP model to produce relevant text descriptions for images, which helps anyone who wants to understand or replicate the style and content of existing images.

This tool is particularly useful for identifying key elements in images and suggesting prompts for creating similar imagery, aiding users in both creative and analytical tasks.

Key Features of CLIP Interrogator AI

Image Analysis

CLIP Interrogator uses the CLIP model to analyze images and generate descriptive text or tags, bridging the gap between visual content and language.

Prompt Generation

The tool is particularly useful for suggesting prompts to create similar images, aiding users in both creative and analytical tasks.

User-Friendly Application

Available on Hugging Face, the CLIP Interrogator is a user-friendly application developed by pharmapsychotic, making it accessible for a wide range of users.

How Does the CLIP Interrogator Work?

Base Caption Generation

Uses the BLIP model to create an initial caption for the image. This caption gives a general description of what the image shows.

Enhancement with "Flavors"

Draws on a set of specific phrases, known as "Flavors," as candidate additions to the base caption. These phrases cover various categories such as objects, styles, and artist names.

Matching with CLIP

Uses the CLIP model to match the image with the most fitting phrases from the "Flavors". This ensures the final text is more detailed and closely aligned with the image's content.
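The matching step can be illustrated with a minimal sketch. It assumes the image and each flavor phrase have already been turned into embedding vectors. The three-dimensional vectors, the flavor names, and the `build_prompt` helper are made up for illustration and are not taken from the tool's source code. Real CLIP embeddings have hundreds of dimensions, and the actual tool may select phrases differently.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def build_prompt(caption, image_vec, flavor_vecs, top_k=2):
    """Append the top_k flavors most similar to the image to the base caption."""
    ranked = sorted(
        flavor_vecs,
        key=lambda name: cosine(image_vec, flavor_vecs[name]),
        reverse=True,
    )
    return ", ".join([caption] + ranked[:top_k])


# Toy embeddings (hypothetical values, chosen only for illustration).
image_vec = [0.9, 0.1, 0.3]
flavor_vecs = {
    "oil painting": [0.8, 0.2, 0.4],
    "pixel art": [0.1, 0.9, 0.1],
    "warm lighting": [0.7, 0.0, 0.6],
}

# The base caption stands in for what a captioning model such as BLIP might return.
prompt = build_prompt("a cottage by a lake", image_vec, flavor_vecs)
print(prompt)
```

With these toy values, "oil painting" and "warm lighting" score highest against the image vector, so they are appended to the caption, while "pixel art" is left out.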

Application

The enriched text descriptions are especially useful for generating prompts for AI image generators, providing a deeper understanding of the image's elements.

CLIP Interrogator Models

BLIP Model

BLIP (Bootstrapping Language-Image Pre-training) generates a basic, initial caption for an image. The caption gives a general, straightforward description of what the image depicts and serves as the foundation for further analysis.

CLIP Model

CLIP (Contrastive Language–Image Pre-training) is used to enhance the basic description from BLIP. It compares the image with a variety of predefined phrases, and the best-matching phrases are added to the description. As a result, the final text is more detailed and more closely aligned with the specific content and context of the image.
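As a rough illustration of how such a comparison can be read, the sketch below turns similarity scores between one image and several phrases into relative probabilities with a softmax. The similarity scores and the `scale` value of 100 are illustrative assumptions, and this is not necessarily how CLIP Interrogator itself ranks phrases.

```python
import math


def softmax(scores, scale=100.0):
    """Convert similarity scores into probabilities that sum to 1."""
    scaled = [s * scale for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical image-to-phrase similarity scores (made up for illustration).
similarities = {
    "a photo of a dog": 0.28,
    "a photo of a cat": 0.22,
    "a diagram": 0.15,
}

probs = dict(zip(similarities, softmax(list(similarities.values()))))
best = max(probs, key=probs.get)
print(best)
```

Because the scores are scaled before the softmax, even small gaps in similarity become a clear preference for one phrase over the others.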

OpenCLIP Model

OpenCLIP is an open-source implementation of CLIP. It maintains the core functionality of the original model, which involves understanding and interpreting images in the context of natural language.

Pros and Cons

Pros

- Bridges visual content and language

- Generates descriptive text

- Uses advanced CLIP model

- Effective image analysis

- Web-based application

Cons

- Requires internet access

- Limited to CLIP's scope